
Data Then vs Now: The Shift from Data Warehouses to Streaming Data

From Stored Truth to Moving Signals

Data analytics has changed shape three times.

Not in theory. In the way it moves, where it lives, and how we treat it.

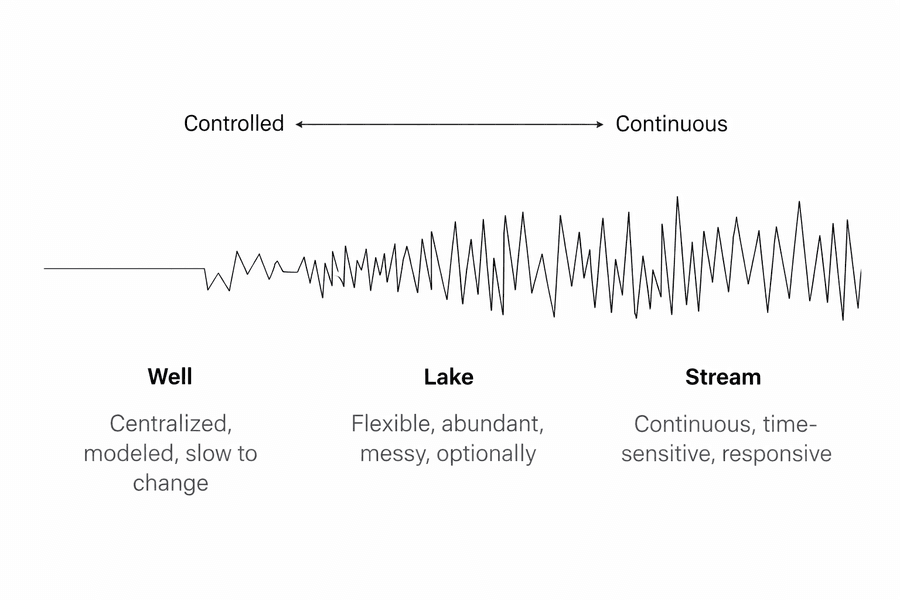

A simple mental model we use for this article:

- Data used to be a well.

- Then it became a lake.

- Now it behaves like streams.

Each era comes with its own “treatment plant”: its own ETL-style flow, expectations, and failure modes.

1) The Well Era (Past): scarce, centralized, controlled

A well is deep, narrow, and intentional.

You don’t pull water unless you have a reason.

You don’t let everyone build their own plumbing.

Where data lived

- Relational databases, data warehouses

- Carefully modeled schemas

- Central BI team as gatekeeper

Typical treatment (ETL flow)

- Extract from operational systems (ERP/CRM)

- Transform into strict business models (star schemas, dimensions)

- Load into a warehouse where queries are “safe”
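To make the three steps concrete, here is a minimal Python sketch of a classic ETL run. The source rows, the table names (`dim_customer`, `fact_orders`) and the in-memory “warehouse” dict are hypothetical stand-ins for an ERP extract and a real warehouse. The point it illustrates is that the star-schema modeling happens before anything is loaded.

```python
from decimal import Decimal

# Hypothetical rows extracted from an operational system (e.g. an ERP orders table).
SOURCE_ORDERS = [
    {"order_id": 1, "customer": "Acme", "country": "NL", "amount": "120.50"},
    {"order_id": 2, "customer": "Globex", "country": "DE", "amount": "80.00"},
    {"order_id": 3, "customer": "Acme", "country": "NL", "amount": "42.25"},
]


def extract():
    """Extract: read rows from the source system (stubbed here with a static list)."""
    return [dict(row) for row in SOURCE_ORDERS]


def transform(rows):
    """Transform: split flat rows into a customer dimension and an orders fact table."""
    dim_customer = {}  # natural key (customer name) -> dimension row
    fact_orders = []
    for row in rows:
        name = row["customer"]
        if name not in dim_customer:
            dim_customer[name] = {
                "customer_key": len(dim_customer) + 1,  # surrogate key
                "name": name,
                "country": row["country"],
            }
        fact_orders.append({
            "order_id": row["order_id"],
            "customer_key": dim_customer[name]["customer_key"],
            "amount": Decimal(row["amount"]),  # enforce a numeric type before loading
        })
    return list(dim_customer.values()), fact_orders


def load(warehouse, dim_customer, fact_orders):
    """Load: write the already-modeled tables into the (in-memory) warehouse."""
    warehouse["dim_customer"] = dim_customer
    warehouse["fact_orders"] = fact_orders
    return warehouse


warehouse = load({}, *transform(extract()))
```

Nothing reaches `warehouse` until the transform has assigned surrogate keys and enforced types, which is a large part of why this era felt trustworthy, and also why it was slow to change.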

Strengths

- High trust and consistency

- Clear definitions and governance

Trade-offs

- Slow to change

- Data becomes “official” only after it’s fully processed

2) The Lake Era (Present-ish): abundant, flexible, messy

A lake is wide and full of water from many sources.

You can drop in logs, events, files, tables — and decide later what it means.

Where data lived

- Object storage + lakehouse patterns

- Semi-structured data and open file formats (JSON, Parquet)

- Many producers, many consumers

Typical treatment (ELT-leaning flow)

- Extract everything (raw ingestion)

- Load into the lake as-is (the raw, or “bronze”, layer)

- Transform downstream into curated layers (silver/gold)
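A rough sketch of the same flow in lake terms, assuming a bronze layer of raw JSON lines (the sample events and field names are invented for illustration). Bronze keeps everything exactly as received; the silver step parses, filters and deduplicates; gold produces a business-ready aggregate.

```python
import json
from collections import defaultdict

# Hypothetical bronze layer: raw JSON lines landed in object storage, untouched.
BRONZE = [
    '{"event_id": "a1", "user": "u1", "type": "click", "ts": "2024-05-01T10:00:00"}',
    '{"event_id": "a2", "user": "u2", "type": "view"}',
    '{"event_id": "a1", "user": "u1", "type": "click", "ts": "2024-05-01T10:00:00"}',
    'not valid json',
    '{"event_id": "a3", "user": "u1", "type": "view", "ts": "2024-05-01T11:30:00"}',
]

REQUIRED = {"event_id", "user", "type", "ts"}


def to_silver(raw_lines):
    """Silver: parse, drop malformed or incomplete records, deduplicate by event_id."""
    seen = set()
    clean = []
    for line in raw_lines:
        try:
            event = json.loads(line)
        except json.JSONDecodeError:
            continue  # a real pipeline would quarantine this record, not just skip it
        if not isinstance(event, dict) or not REQUIRED.issubset(event):
            continue  # missing fields: meaning cannot be decided yet
        if event["event_id"] in seen:
            continue  # duplicate delivery of the same event
        seen.add(event["event_id"])
        clean.append(event)
    return clean


def to_gold(silver):
    """Gold: a business-ready aggregate, here event counts per (user, type)."""
    counts = defaultdict(int)
    for event in silver:
        counts[(event["user"], event["type"])] += 1
    return dict(counts)


silver = to_silver(BRONZE)
gold = to_gold(silver)
```

The malformed and incomplete records still sit in bronze. That is the flexibility of the lake, and also how a swamp starts if nobody owns the silver and gold layers.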

Strengths

- Fast ingestion, flexible exploration

- Supports many analytics use cases without upfront modeling

Trade-off

- Without discipline, the lake turns into a swamp.

3) The Stream Era (Now): continuous, time-sensitive, always in motion

A stream is not stored first and analyzed later.

It’s processed as it flows.

Latency becomes part of the product.

Where data lives

- Event pipelines, streaming platforms

- Real-time feature stores, operational analytics

- Metrics and monitoring become first-class citizens

Typical treatment (Streaming ETL)

- Capture events as they happen, via change data capture (CDC) or event tracking

- Validate and enrich in motion (schemas, contracts, joins)

- Route to multiple sinks
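The capture, validate, enrich and route steps can be sketched as a single loop over incoming events. This is a framework-free illustration under stated assumptions: `CONTRACT`, `USER_SEGMENTS`, the sink functions and the alert threshold are all hypothetical, and a production system would run this logic inside a streaming platform rather than a plain Python `for` loop.

```python
from typing import Callable, Iterable

# Hypothetical data contract agreed with the producer: field name -> required type.
CONTRACT = {"order_id": int, "user_id": str, "amount": float}

# Hypothetical reference data for enrichment (in practice a cached table or stream join).
USER_SEGMENTS = {"u1": "premium", "u2": "standard"}


def validate(event: dict) -> bool:
    """Check the event against the contract while it is still in flight."""
    return all(isinstance(event.get(field), kind) for field, kind in CONTRACT.items())


def enrich(event: dict) -> dict:
    """Attach reference data to the event without mutating the original."""
    return {**event, "segment": USER_SEGMENTS.get(event["user_id"], "unknown")}


def process(stream: Iterable[dict],
            sinks: list[Callable[[dict], None]],
            dead_letter: Callable[[dict], None]) -> None:
    for event in stream:  # handle each event as it arrives, not in a nightly batch
        if not validate(event):
            dead_letter(event)  # keep rejected events for inspection
            continue
        enriched = enrich(event)
        for sink in sinks:  # fan the same event out to every consumer
            sink(enriched)


lake, alerts, rejected = [], [], []


def lake_sink(event: dict) -> None:
    lake.append(event)  # everything lands in the lake for history


def alert_sink(event: dict) -> None:
    if event["amount"] > 1000.0:  # hypothetical threshold for a real-time alert
        alerts.append(event)


events = [
    {"order_id": 1, "user_id": "u1", "amount": 25.0},
    {"order_id": 2, "user_id": "u2", "amount": 4200.0},
    {"order_id": "3", "user_id": "u3", "amount": 10.0},  # breaks the contract: order_id is a str
]
process(events, sinks=[lake_sink, alert_sink], dead_letter=rejected.append)
```

Events that break the contract go to a dead-letter list instead of being silently dropped, which is one small example of governance moving to the point where data enters the pipeline.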

Strengths

- Real-time feedback loops (fraud, personalization, monitoring)

- Closer alignment between data and product behavior

Trade-offs

- Harder debugging (time windows, out-of-order events)

- Governance must shift “left” (contracts at the source)

- Observability becomes mandatory, not optional
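Out-of-order events are where streaming gets tricky. This sketch counts events in 60-second tumbling windows by event time and uses a simple watermark (the newest event time seen, minus an allowed lateness) to decide when a window is final. It is a hand-rolled simplification of the watermark idea used by stream processors, not any engine's API, and the window size, lateness and timestamps are made-up values.

```python
from collections import defaultdict

WINDOW = 60            # tumbling window size in seconds (hypothetical value)
ALLOWED_LATENESS = 30  # watermark trails the newest event time by this much (hypothetical)


def tumbling_counts(event_times):
    """Count events per event-time window when events arrive out of order.

    event_times are given in arrival order. A window is emitted once the
    watermark passes its end; events for an already-emitted window are
    returned separately as too late.
    """
    open_windows = defaultdict(int)  # window start -> running count
    emitted = {}                     # window start -> final count
    too_late = []
    max_seen = float("-inf")
    watermark = float("-inf")
    for ts in event_times:
        start = ts - ts % WINDOW
        if start + WINDOW <= watermark:
            too_late.append(ts)  # its window is already final; the result cannot be fixed in place
            continue
        open_windows[start] += 1
        max_seen = max(max_seen, ts)
        watermark = max_seen - ALLOWED_LATENESS
        for s in sorted(open_windows):  # sorted() copies the keys, so popping is safe
            if s + WINDOW <= watermark:
                emitted[s] = open_windows.pop(s)
    for s in sorted(open_windows):  # end of input: flush windows that are still open
        emitted[s] = open_windows[s]
    return emitted, too_late


emitted, too_late = tumbling_counts([5, 50, 70, 20, 100, 130, 10])
```

With these inputs, the event at second 20 arrives out of order but within the allowed lateness, so it is still counted in the first window. The event at second 10 arrives after that window has been emitted and ends up in `too_late`. Deciding what to do with such events (drop them, re-emit the window, or patch results downstream) is exactly the kind of debugging and design work this trade-off refers to.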

The quiet point

These aren’t replacements. They stack.

Most mature systems are hybrid:

- Streams for immediacy

- Lakes for breadth and history

- Wells (warehouses/models) for trusted business truth

The question isn’t “which one is best?”

It’s: What kind of water are we dealing with, and how quickly do we need it to be safe to drink?
